Facebook has apologised for the issues surrounding the online social network's content moderation that were highlighted in a recent Channel 4 documentary.

Representatives from the tech giant came before the Oireachtas Communications Committee to answer questions about the company's content moderation, much of which is done in Dublin.

Over a six-week period earlier this year, an undercover reporter from Channel 4's Dispatches programme worked as a content moderator at the offices of Facebook's Dublin-based training partner, CPL Resources.

The programme revealed weaknesses in Facebook's content moderation. It showed how graphic and disturbing material was allowed to remain online.

Representatives of the social media giant apologised for the failings highlighted in that programme.

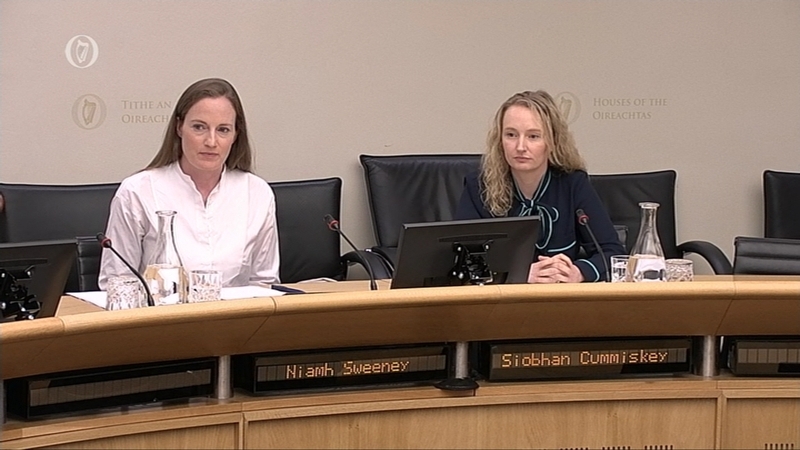

Niamh Sweeney, the Head of Public Policy for Facebook Ireland, and Siobhán Cummiskey, Facebook's Head of Content Policy for Europe, the Middle East and Africa, appeared before the committee.

Ms Sweeney acknowledged the upset and concern caused by what she called the "deeply disturbing" programme.

She said: "We know that many of you who watched the Dispatches programme were upset and concerned by what you saw. Siobhán and I - along with our colleagues here in Dublin and around the world - were also upset by what came to light in the programme."

WATCH: @facebook Niamh Sweeney, head of Public Policy for Facebook Ireland, apologises for content moderation issues raised in @C4Dispatches programme & disagrees FB is turning a blind eye to problematic content is in the company's interests pic.twitter.com/BpR3rhnfF2

— RTÉ Politics (@rtepolitics) August 1, 2018

The company stressed that the safety and security of its users is a "top priority". An internal investigation is under way in light of the failings highlighted in the programme.

Ms Sweeney said: "We have created policies, tools and a reporting infrastructure that are designed to protect all of our users, especially those who are most vulnerable to attacks online - children, migrants, ethnic minorities, those at risk from suicide and self-harm and others.

"So, it was deeply disturbing for all of us who work hard on these issues to watch the footage that was captured on camera, at our content review centre here in Dublin, as much of it did not accurately reflect Facebook's policies or values," she added.

She said Facebook is "one of the most heavily scrutinised companies in the world. It is right that we are held to high standards. We also hold ourselves to those high standards."

She added: "Dispatches identified some areas where we have failed, and Siobhán and I are here today to reiterate our apology for those failings. We should not be in this position and we want to reassure you that whenever failings are brought to our attention, we are committed to taking them seriously, addressing them in as swift and comprehensive a manner as possible, and making sure that we do better in future."

Ms Sweeney said that Facebook strongly disagrees with any suggestion that turning a blind eye to problematic content is in the company's interests.

"But first I want to address one of the claims that was made in the programme, that is the suggestion that it is in our interests to turn a blind eye to controversial or disturbing content on our platform. This is categorically untrue," she said.

"Creating a safe environment where people from all over the world can share and connect is core to our business model. If our services are not safe, people won't share content with each other and, over time, would stop using them. Nor do advertisers want their brands associated with disturbing or problematic content, and advertising is Facebook's main source of revenue."

The company has also pledged to make changes to its training of content moderation teams.

Ugly and disturbing material is the "cocaine" which attracts users to continue using the Facebook platform, according to Fianna Fáil TD Timmy Dooley.

Mr Dooley was particularly critical of a 2016 leaked internal memo from Facebook executive Andrew Bosworth about the company's growth tactics.

Fianna Fáil TD @timmydooley attacks @facebook over a 2016 internal memo from executive @boztank Andrew Bosworth. Niamh Sweeney, Head of Public Policy for Facebook Ireland responds pic.twitter.com/QhzvjAXGKB

— RTÉ Politics (@rtepolitics) August 1, 2018

The Fianna Fáil communications spokesperson said: "Somebody as senior as him setting out the policies that worked with your company. In other words material, even if it is ugly, disturbing, bullying, and its death and destruction and bullying of children. It's de facto good because it is effectively the cocaine which attracts users to this material."

He disagreed with Ms Sweeney that such material is "off-putting for people" and damaging to Facebook.

He added: "I put it to you that it is quite the opposite. That this is the kind of material that attracts lots of eyeballs. Makes people for sure outraged but in their outrage, they copy, they paste and they share."

Responding, Ms Sweeney said "a lot of us would like to go back and hit delete" before Mr Bosworth sent out his statement.

"His views as expressed in that post, absolutely do not represent the views of the company. We would never stand over them and it was taken up with him at the highest level by Mr Mark Zuckerberg. 'Boz' as he is known within the company has a reputation for posting provocative things for getting a conversation going. That is my understanding of what happened here."

Earlier, Sinn Féin's communications spokesperson Brian Stanley raised concerns about a decision not to remove a video depicting a three-year-old child being physically assaulted by an adult.

Ms Sweeney said it was a mistake "because we know that the child and the perpetrator were both identified in 2012. The video should have been removed from the platform at the time."

The video was removed as soon as Channel 4's Dispatches brought it to the social media company's attention.

Mr Stanley said the image of a child being abused should have been removed immediately.

Ms Sweeney admitted this demonstrates "one of the gaps in our system". She added: "I can't defend that and I won't defend that. It should not have been up for six years."

Ms Cummiskey added: "We take a zero tolerance approach to child sex abuse content."

She said that such content is deleted and the company uses special image matching technology to make sure the content is not uploaded again.

Committee Chairperson Hildegarde Naughton said that law enforcement bodies such as An Garda Síochána should be the first point of contact when such material is detected.

Ms Cummiskey agreed and said "that is a fair point."

Independent TD Michael Lowry pointed out that there is now a fear that Facebook is a "big global entity" that could run out of control.

He claimed: "It has become a tool of abuse and it is a threat to society."

He asked the Facebook representatives: "Would you accept the sheer numbers and the level of diverse activity on Facebook, and other social platforms, is making it impossible for you to control and self-regulate?"

Ms Sweeney pointed out that the vast majority of people who use the Facebook service do so to connect with their friends and family and do not encounter the type of content that was being discussed at the committee today.

She said it is important to note that most of the two billion people who use Facebook do not encounter issues or raise complaints.

Following today's meeting, committee chairperson Hildegarde Naughton said social media cannot regulate itself any longer.

Deputy Naughton said: "Social media platforms have shown themselves incapable of self-regulation. If they won't regulate themselves, we must do it for them."

She also said she would seek a meeting with the European Commissioner for the Digital Economy and Society, Mariya Ivanova Gabriel, to discuss the need for European regulation in this area.

Deputy Naughton added: "As Facebook's European Headquarters is based in Ireland, we must lead the way. The press are regulated. Television and radio is regulated. It is the view of the committee that the time for self-regulation by social media platforms is over."

"We are not planning to turn back the clock or ban social media. What we are proposing is to regulate to ensure that such platforms are safe places for its users," Deputy Naughton said.