Opinion: just what exactly is Artificial Intelligence and why is it so important? A primer on how AI now rules everything around us

In the beginning

The first official use of the term Artificial Intelligence (AI) was in the proposal for the 1956 Dartmouth Summer Research Project on Artificial Intelligence. That six-week workshop marked the birth of AI as a field of study, and the organisers - John McCarthy, Marvin Minsky, Nathaniel Rochester and Claude Shannon - and the workshop attendees led the way for many years.

At the beginning, the focus was on developing computational systems that had the capacity for the human abilities that are traditionally associated with intelligence. These included language use, mathematics and self-improvement on tasks through experience (learning) and planning (for example in games such as chess).

The Turing test

One of the challenges has always been to develop an operational definition of what intelligence is. Without this definition, it is impossible for AI researchers to determine whether they have succeeded in creating an intelligent system or not.

In 1950, Alan Turing proposed a test for intelligence, now known as the Turing test. Here, a human evaluator poses a sequence of questions to two respondents (A and B). The evaluator knows that one of the respondents is a machine and the other a human, but does not know which is which.

If, at the end of the sequence of questions, the evaluator is not able to distinguish between the machine and the human based on their responses, then the machine has passed the test and is considered to be intelligent. The test essentially boils down to the claim that if a machine acts intelligently then it is intelligent.

There have been a number of criticisms of the Turing test over the years. Philosopher John Searle makes a distinction between weak AI, which claims that systems only act as if they can think, and strong AI where the claim is that the system can actually think (not just simulate thinking). Most AI researchers today adopt the weak AI position and don’t worry about whether they are actually creating intelligence or not.

Easy for humans, hard for computers

There were many initial breakthroughs in AI research, including some in general purpose methods for logical reasoning and theorem proving. Terry Winograd’s SHRDLU system was able to react to natural language commands, while the Shakey robot project at the Stanford Research Institute showed that it was possible to link symbolic reasoning and physical actions.

But the enthusiasm generated by these initial breakthroughs faded over the years as these successes proved difficult to translate and scale into the real world. In fact, the main lesson learnt from early research in AI is that it is very hard for computers to do what is easy for humans. "It is comparatively easy to make computers exhibit adult level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility", wrote roboticist Hans Moravec in 1988's "Mind Children" (Harvard University Press).

Solving the paradox

The response by the AI community to Moravec’s paradox and the challenge of scaling AI systems to the real world has been multi-faceted. Some researchers have expanded their conceptualisation of intelligence beyond the traditional view to include things such as perception, memory, self-regulation, emotion and locomotion. This research is often described as embodied cognition as it assumes that the cognitive abilities of an organism are closely shaped by and dependent on the whole body of the organism and not just the brain. This strain of AI research is the type of research most likely to result in autonomous intelligent robots such as those that appear in the movies.

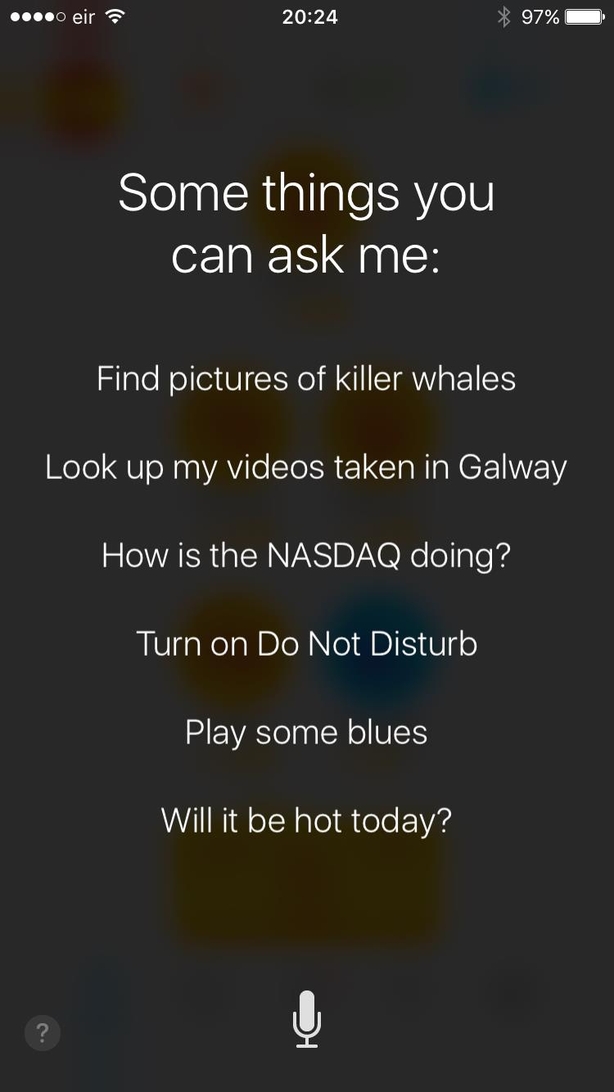

A different response is to focus on developing systems that are designed to be experts in a specific and well-defined task or domain. These types of AI systems are already commonplace in developed societies and will continue to proliferate over the coming years. The Roomba robot hoover, the Google search engine, the Amazon recommender system, the Siri iPhone interface, spam filters and machine translation systems all include AI. However, each is designed for a specific task and would be useless on other tasks, so they are best viewed as experts in specific domains rather than as having the general and flexible intelligence that we humans take for granted.

The advent of deep learning

Modern AI research focuses on using large datasets and machine learning to get the computer to learn the appropriate rules for a domain. The field of deep learning is very much at the core of this type of data-driven AI. Nearly all of the recent headlines relating to AI breakthroughs in image, speech and language processing, autonomous cars, and computer games are based on deep learning.

The field of machine learning is focused on developing algorithms that enable a computer to extract patterns from large datasets and to generate models that implement these patterns. The most common type of pattern that a machine learning algorithm will extract is a mapping from a set of input features to an output feature. In mathematics, the concept of a function describes a mapping from a set of inputs to an output and machine learning can be understood as learning functions from data.

For example, a spam filter implements a function that maps from a set of features in an email (such as the words in the email or the sender’s address) to a label: spam or not spam. Face recognition software implements a function that maps from a set of features in an image (such as the lines and pixel colours in the image) to a label describing whether a pixel is within the bounding box of a face or not.
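To make the idea of learning a function from data concrete, here is a minimal sketch of a spam filter in Python. It is an illustration only, not how any production filter works: the four training emails are invented, the function names `train` and `classify` are hypothetical, and the technique chosen (a naive Bayes word-count model with add-one smoothing) is just one simple way to learn such a mapping.

```python
from collections import Counter, defaultdict
import math


def train(examples):
    """Learn word counts per label from (text, label) pairs."""
    word_counts = defaultdict(Counter)  # label -> word -> count
    label_counts = Counter()            # label -> number of emails
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts


def classify(model, text):
    """The learned function: map the words of an email to a label."""
    word_counts, label_counts = model
    vocabulary = set().union(*word_counts.values())
    total_emails = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        # Start from how common the label is, then weigh each word.
        score = math.log(label_counts[label] / total_emails)
        words_in_label = sum(word_counts[label].values())
        for word in text.lower().split():
            # Add-one smoothing so unseen words do not zero out the score.
            score += math.log(
                (word_counts[label][word] + 1)
                / (words_in_label + len(vocabulary))
            )
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Invented training data, for illustration only.
examples = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("meeting agenda for monday", "not spam"),
    ("lunch on monday with the team", "not spam"),
]
model = train(examples)
```

With this toy data, `classify(model, "free prize")` returns `"spam"` and `classify(model, "monday meeting")` returns `"not spam"`. Nobody wrote a rule about the word "free": the mapping from words to labels was extracted entirely from the examples.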

In many ways, the idea of a function, this mapping from inputs to outputs, is a better way of thinking of modern AI than a robot that engages with us fluently and naturally through language. Understanding that the idea of a function is at the core of modern AI helps us to understand that many of the intelligent systems we use today are limited to the specific task they are designed to handle.

The future

Prof. Stephen Hawking has warned that the creation of full artificial intelligence could threaten the existence of humanity. While Hawking is correct in this view, the likelihood of full artificial intelligence coming into existence in the near future is very small.

A more imminent challenge posed by AI for modern societies is deciding how much autonomy we wish to invest in these systems. Already, functions learned from data directly affect our lives on a daily basis in a myriad of ways. A learned function may map your profile to a higher car or health insurance premium, to a higher price on an online store or onto a no-fly list. In fact, AI systems are currently being used in several cities around the world to decide where police should patrol, and to support parole and sentencing decisions in some jurisdictions.

Frequently an argument is made that these systems are objective and fairer because the decision making processes are learned from data. The problem with this reasoning is that AI systems are amoral and simply extract the patterns in the data rather than being objective. Consequently, a system will replicate and reinforce the prejudices of the society that produced its data unless tremendous care is taken in the design and sampling of the data sets. These are worrying trends and we need to be aware of these changes in our society.
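A small sketch in Python shows how a learned function can carry a historical bias forward. Everything here is hypothetical: the groups "A" and "B", the invented record of past decisions, and the helper names `learn_approval_rates` and `decide` are assumptions made for illustration and do not describe any real system.

```python
from collections import defaultdict


def learn_approval_rates(records):
    """Learn, for each group, the share of past applications approved."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        approvals[group] += int(approved)
    return {group: approvals[group] / totals[group] for group in totals}


def decide(rates, group, threshold=0.5):
    """The learned function: approve if the group was usually approved."""
    return rates[group] >= threshold


# Invented history in which group B was approved far less often.
history = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 3 + [("B", False)] * 7
)
rates = learn_approval_rates(history)
```

Here `decide(rates, "A")` is `True` and `decide(rates, "B")` is `False`. The function has no notion of whether the past decisions were fair. It has simply found the pattern in the records and will repeat it for every new applicant.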

However, AI also promises many benefits because it has the potential to help us make better decisions in areas from medicine to transport to business. The AI genie is already out of the bottle, but we need to be careful about what we wish for.

The views expressed here are those of the author and do not represent or reflect the views of RTÉ