Analysis: emotion recognition technologies currently show low levels of accuracy so there's a way to go before they can read your emotions

A widespread application of machine learning algorithms is to track people's identity. Just as identity can be recognised through the recording of faces, it is claimed that emotions can be recognised too, though emotion recognition algorithms are not used for global surveillance yet.

Since the first commercial algorithm, Facereader, was developed in 2005, emotion recognition algorithms have mainly been used to validate marketing content such as TV ads, product packaging or even food taste. With the multiplication of devices able to record people's faces, and with continuous improvements in the processing power of computers, emotion recognition technologies have found new real-life applications in the automotive industry and, perhaps surprisingly, in job recruitment technologies.


From RTÉ 2fm's Dave Fanning Show, China analyst Clifford Coonan discusses the country's use of emotion recognition technology

But the performance and accuracy of emotion recognition technologies based on facial expression have come under close scrutiny from the AI watchdog the AI Now Institute. In January 2021, HireVue dropped emotion recognition technologies from its AI recruitment assessment product. This decision may be related to the growing body of research that is invalidating assumptions about the link between facial expressions and emotions.

Since the 1970s, the most common paradigm in the psychology of emotions has rested on three principles: (i) there is a direct relationship between an emotion felt and its facial expression, (ii) there are six basic emotions and each has a prototypical expression, and (iii) emotions and their expressions are universal. These assumptions were also conveyed in popular culture, such as in the Disney Pixar movie Inside Out (2015) or the TV show Lie to Me (2009-2011), and were commonly accepted by companies and organisations.

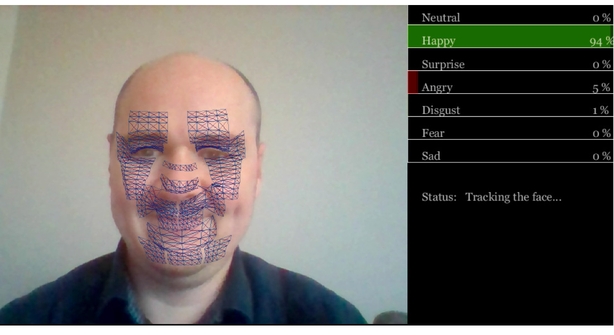

These assumptions were also ideal for machine learning algorithms. Following this model, algorithms claim to identify the emotion felt by someone by categorising their facial expression as one of six basic emotions, and are applied to everyone without distinction of culture or origin.
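To make the model concrete, here is a minimal sketch of that final categorisation step. It assumes a hypothetical upstream system has already turned a face into one score per emotion; the function name and the example scores are invented for illustration and do not reproduce any commercial product.

```python
# The six "basic" emotions of the classic model.
BASIC_EMOTIONS = ("anger", "disgust", "fear", "happiness", "sadness", "surprise")


def classify_expression(scores):
    """Return the basic emotion with the highest score.

    `scores` is a hypothetical mapping from emotion name to a number
    produced by some upstream face-analysis step (an assumption here).
    """
    missing = set(BASIC_EMOTIONS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    # The same rule is applied to every face, whatever the person's
    # culture or context, which is exactly the assumption under debate.
    return max(BASIC_EMOTIONS, key=lambda emotion: scores[emotion])


# Invented scores, for illustration only.
example_scores = {
    "anger": 0.05, "disgust": 0.02, "fear": 0.03,
    "happiness": 0.70, "sadness": 0.05, "surprise": 0.15,
}
predicted = classify_expression(example_scores)
```

Note that the output is always one of six labels: the sketch has no way to say "mixed", "none of these" or "it depends on the situation".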

From TEDxBerlin, Daniel McDuff on machine learning tools which enable the recognition and analysis of emotions

In addition to the hundreds of academic algorithms, a rapidly growing number of tech companies are providing commercial algorithms to infer emotions from facial expressions. However, the largest study to date (led by DCU with collaborators from Queen's University Belfast, University College London and University of Bremen) shows that results from these technologies are significantly less accurate than those of human observers.

In this study, eight commercially available algorithms were compared with human observers in the recognition of 937 videos supposedly expressing an emotion. There was considerable variance in recognition accuracy among the eight algorithms, and their classification accuracy was consistently lower than the observers’ accuracy.

Another study I conducted revealed that accuracy also varies with the type of emotion. These findings indicate potential shortcomings of existing algorithms for identifying emotions compared to human observers.
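For readers curious about what "accuracy" means in such comparisons, the sketch below shows one simple way to compute it, overall and per emotion. The labels are made up purely for illustration and are not data from the studies; the function names are likewise assumptions.

```python
from collections import defaultdict


def accuracy(true_labels, predicted_labels):
    """Share of items whose predicted label matches the intended one."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must have the same length")
    if not true_labels:
        raise ValueError("label lists must not be empty")
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


def accuracy_by_emotion(true_labels, predicted_labels):
    """Accuracy computed separately for each intended emotion."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for t, p in zip(true_labels, predicted_labels):
        totals[t] += 1
        hits[t] += int(t == p)
    return {emotion: hits[emotion] / totals[emotion] for emotion in totals}


# Made-up labels for six videos (illustration only, not study data).
intended = ["happiness", "happiness", "fear", "fear", "anger", "anger"]
algorithm = ["happiness", "happiness", "surprise", "fear", "sadness", "disgust"]
observers = ["happiness", "happiness", "fear", "fear", "anger", "sadness"]

algorithm_accuracy = accuracy(intended, algorithm)
observer_accuracy = accuracy(intended, observers)
algorithm_per_emotion = accuracy_by_emotion(intended, algorithm)
```

Breaking the score down by emotion matters because a single overall figure can hide the fact that a system does well on some expressions and poorly on others.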

The accuracy of algorithms is not the only problem here; the fundamental principle of a relationship between emotions and facial expressions is also called into question. Together with colleagues at the Université Grenoble-Alpes, I recorded 232 participants watching videos and collected the feelings they reported while watching. The recordings of their facial expressions were shown to human observers and were processed by a commercial algorithm designed by one of the leaders in the domain of emotion recognition technologies.

The results revealed that the accuracy of the algorithm in identifying the emotion felt from facial expressions was very low, as was the accuracy of human observers. In fact, it appears that there is no scientific evidence showing a reliable correlation between the emotion felt and facial expressions. This tends to indicate that all the assumptions upon which emotion recognition technologies were built are now invalid.

Despite these criticisms, there are some emerging solutions to the frailties in emotion recognition technologies. With the multiplication of devices available, recognition technologies will use not only the face, but also the entire body, and the context in which the expression is produced, to accurately infer emotions.

The views expressed here are those of the author and do not represent or reflect the views of RTÉ