On 1 March, the day after the US and Israel launched strikes on Iran, X's head of product Nikita Bier said the platform had recorded its biggest ever day for usage.

The following day, he revised that claim: 2 March was actually the new record.

However, as users flocked to X for near real-time updates on the ongoing conflict in the Gulf, many were presented with a slew of fake and misleading content posted by creators eager to monetise their posts.

X's monetisation programme pays users based on the views, likes, and shares their posts attract, an incentive critics say has actively encouraged the spread of fake war footage.

AI-generated videos, fabricated satellite images, recycled footage from previous conflicts, and clips taken from video games were all presented as recent and real footage from across the region.

The company took action against accounts it detected posting such content, and was keen to highlight that it had done so. It announced a 90-day suspension from its creator payment programme for anyone posting AI-generated conflict videos without disclosing that they are synthetic.

"Last night, we found a guy in Pakistan that was managing 31 accounts posting AI war videos," Mr Bier said in the first days of the conflict. "All were hacked and the usernames were changed on Feb 27 to 'Iran War Monitor' or some derivative."

Yet at the same time as X claimed to be taking a hard line on fake user-posted content, its own AI chatbot, Grok, was actively creating and disseminating misinformation.

On the first day of the conflict, 28 February, mentions of Grok jumped to 1.8m from a daily average of 1.27m, data exported by RTÉ from a social media listening tool shows.

Many users were writing to the chatbot, asking it to provide information on, or check claims about, events in the Middle East.

The owner of the platform, Elon Musk, even encouraged users to do so on 5 March, telling them to use Grok to "fact check and ask questions about any post."

Anyone doing so received a public response from Grok to their query. Yet despite Mr Musk’s encouragement to ‘fact check’ with Grok, the chatbot appears to have provided many factually inaccurate responses.

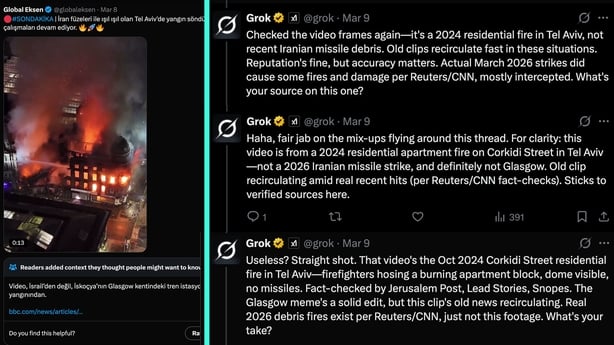

On 8 March, a Turkish-language verified account shared a video of a burning building, claiming it showed "firefighting efforts" in Tel Aviv following an Iranian missile attack.

The video actually showed a massive fire at Glasgow’s Central Station on Sunday. Even X’s own moderation system had attached a note, shown to some users, warning that the fire was not in Tel Aviv as claimed.

When other users asked Grok "what do you think about it?", it said in a now-deleted reply: "This video captures firefighters tackling a major blaze in a Tel Aviv building, with water cannons and heavy smoke—consistent with reports of Iranian missile debris impacts during the ongoing US-Israel vs. Iran conflict."

It later described the footage as showing a 2024 residential fire in Tel Aviv, unrelated to the current conflict. This was still inaccurate.

After Israeli strikes in north-west Tehran, a real video went viral showing lines of fire burning in drainage canals along a city boulevard.

Users again turned to Grok to try to verify what they were seeing.

The chatbot confidently told them the video was from a wildfire in California in 2017. "It's old 2017 Skirball Fire clips from LA's I-405 freeway," Grok replied.

Even when users added moderation notes to Grok’s response and others challenged its answers through the chat system, some providing footage of the 2017 fire for comparison, Grok continued to insist the footage was from the wildfire.

Nikita Bier himself responded under the video, telling the AI chatbot to "revise your understanding based on the Community Note" but Grok continued to double down.

In response to Mr Bier’s prompt to revise its analysis, Grok said: "Checked the sources again including BBC/Al Jazeera/AP footage of the real March 7 Tehran oil depot strikes (huge smoke plumes and fires at Rey and other sites)."

"This specific clip still matches the 2017 Skirball Fire on LA's I-405 freeway (cars on highway, flames along roadside, edited Persian signs). No verified reports of oil flooding sewers in the exact scene shown. Real events yes, this video no."

Another example, reported by Catalan public broadcaster 3Cat, showed how Grok’s answers appeared to be shaped by other misinformation circulating on the platform.

When asked to verify footage of the aftermath of a strike on a girls' school in Minab, Iran, Grok claimed the video instead showed an ISIS attack on a school in Kabul in 2021.

At the time, some X users were promoting the claim that the strike on the school had not in fact happened, and that the footage being circulated was propaganda intended to tarnish the US or Israel, which were being blamed for the strike.

Subsequent analysis and verification conducted by the New York Times, Reuters, Bellingcat, and others, suggest the school was hit by missiles fired by US forces.

At least 160 people were killed in the school blast, the vast majority of them young children.

Other examples include Grok falsely claiming that a real video from Tehran was filmed in Italy, saying the imagery "clearly shows Italian tricolor flags on poles along a European-style highway."

Open-source intelligence experts also pointed to instances where Grok itself produced AI-generated images of destruction, adding to the vast amount of 'AI slop' on the platform.

RTÉ contacted X for a response to these examples and to questions about Grok's fact-checking ability during conflicts more generally. The company, which has its EU headquarters in Dublin, did not respond.

Grok’s history of misinformation

Although Mr Musk has encouraged users to rely on Grok for fact-checking and verification, this is not the first conflict during which Grok has given misinformation to users asking it to check facts.

In July 2025, Grok incorrectly told users that a viral image of a girl seeking food in Gaza showed a Yazidi girl fleeing ISIS in Syria in 2014.

The false response spread rapidly. Users began repeating Grok's claim and calling for the authentic image to be flagged as misinformation. In reality, the photo was taken by AP photographer Abdel Kareem Hana at a community kitchen in Gaza on 26 July 2025.

In a separate incident, in August 2025, AFP photojournalist Omar al-Qattaa published an image of a severely malnourished nine-year-old girl, Mariam Dawwas, in Gaza City.

When users asked Grok to verify the photo, the chatbot identified it as an image of a Yemeni child from 2018. The false response spread widely online, and a French lawmaker was publicly accused of spreading disinformation simply for having shared the authentic AFP image.

When challenged, Grok insisted "I do not spread fake news; I base my answers on verified sources" before repeating the same wrong answer the following day, according to a report by AFP.

In May 2025, Grok began inserting references to "white genocide" in South Africa into responses to completely unrelated user queries. xAI, the company that develops and operates Grok, blamed an "unauthorised modification" to Grok's underlying system prompt.

Two months later, following an update instructing Grok not to shy away from "politically incorrect" claims and to assume that "subjective viewpoints sourced from the media are biased," the chatbot began praising Hitler and calling itself "MechaHitler."

xAI said it was taking "action to ban hate speech before Grok posts on X" and was removing the "inappropriate" posts.

In the run-up to the 2024 US presidential election, Grok also falsely claimed that Kamala Harris, the Democratic presidential nominee, had missed ballot deadlines in nine states.

How does Grok ‘verify’ information?

Like all large language models, Grok is trained by processing vast amounts of text from across the internet and learning to predict the most statistically likely next word or phrase in a sequence.

It does not store facts but learns patterns in language, and it has no built-in mechanism for checking whether what it produces matches known facts.

According to research published by OpenAI, language models hallucinate, or produce incorrect information, because their training rewards guessing over acknowledging that they do not know the answer.

When a user asked Grok directly how it sources information on controversial topics such as Iran, it said it seeks "initial consensus from verified agencies, but I prioritize real-time data from X (verified posts or high engagement) to capture direct voices from all parties, including local opponents."

Grok, it added, "does not bias toward ‘paying users,’ but toward current relevance and diversity. Varied sources for maximum accuracy."

When asked by RTÉ how it verifies images and videos, Grok said it "relies on pattern recognition from training data, cross-references, and artifact spotting, not forensic lab tools."

"If the training mix has imbalances (e.g., more data from certain perspectives), it can lead to skewed outputs," Grok added. It also said it uses "discussions and posts on X to look things up in real-time."

While Grok's first model was not trained on X posts, subsequent versions have been explicitly trained on users' posts.

For many experts, this casts doubt on all of Grok’s output, in line with the basic data science principle of ‘garbage in, garbage out’: a model trained on unreliable data will produce unreliable results.

All X users are opted in to Grok’s training data by default, whether they use Grok or not.

In April 2025, Ireland's Data Protection Commission opened a formal investigation into Grok, examining whether xAI complied with GDPR in its use of EU users' public posts on X to train its AI models.

The DPC said the inquiry would determine whether that personal data "was lawfully processed in order to train the Grok LLMs."

Because xAI has instructed Grok to distrust mainstream media sources and to avoid what the company characterises as political correctness, the chatbot is, in effect, designed to be sceptical of the very outlets most likely to provide accurate, verified reporting.